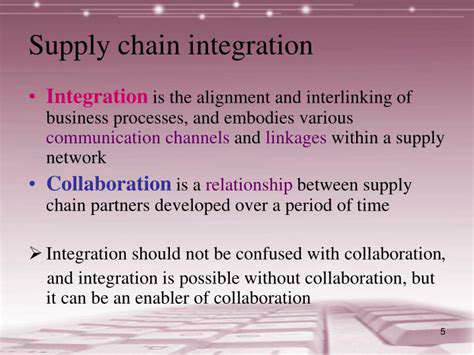

Building a Scalable Supply Chain Technology Infrastructure

Frontend technologies are responsible for the user interface, creating the visual experience and interaction with the application. Choosing the right frontend framework significantly impacts user experience, responsiveness, and overall app performance. React, Angular, and Vue.js are popular choices, each with its own strengths and weaknesses. Careful evaluation of these frameworks, considering factors like project complexity and team familiarity, is key.

Different technologies cater to different needs. For example, React is often chosen for complex, component-heavy applications, while Vue.js might be a better choice for smaller projects due to its lightweight nature. The selected framework should not only meet current needs but also accommodate future development and potential feature enhancements.

Backend Technologies: Powering the Application Logic

Backend technologies handle the server-side logic and data management, providing the foundation for the application's functionality. The choice of backend language and framework impacts the application's performance, scalability, and security. Popular languages and runtimes such as Python, Java, and Node.js (a JavaScript runtime) offer a range of frameworks and tools to build robust and efficient backend systems.

Factors like data storage needs, API design, and security considerations heavily influence the selection process. Thorough research and consideration of these crucial elements are essential for ensuring a reliable and secure backend. Choosing a stack that aligns with team expertise and project requirements is paramount.

Database Technologies: Storing and Retrieving Data

Choosing the right database is critical for data storage and retrieval. Relational databases like MySQL and PostgreSQL are well-suited for structured data, while NoSQL databases like MongoDB are ideal for unstructured or semi-structured data. The database system selected must effectively manage the volume and complexity of data projected for the application.
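As a rough illustration of the distinction above, the sketch below uses Python's built-in `sqlite3` module as a stand-in for a relational database and a JSON document as a stand-in for semi-structured data. The `shipments` table, its columns, and the `event` fields are hypothetical names chosen for the example, not part of any particular system.

```python
import json
import sqlite3

# In-memory SQLite stands in for a relational database such as MySQL or PostgreSQL.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE shipments ("
    "id INTEGER PRIMARY KEY, "
    "sku TEXT NOT NULL, "
    "quantity INTEGER NOT NULL CHECK (quantity > 0))"
)
conn.executemany(
    "INSERT INTO shipments (sku, quantity) VALUES (?, ?)",
    [("SKU-001", 40), ("SKU-002", 15), ("SKU-001", 10)],
)

# Structured data: a fixed schema makes aggregate queries straightforward.
totals = dict(
    conn.execute(
        "SELECT sku, SUM(quantity) FROM shipments GROUP BY sku ORDER BY sku"
    )
)

# Semi-structured data: a document whose fields can vary from record to record,
# which is the kind of payload a document store is designed to hold.
event = {"shipment_id": 1, "carrier": "ExampleFreight", "notes": {"fragile": True}}
document = json.dumps(event)
restored = json.loads(document)
```

The relational side enforces the schema (for instance, the `CHECK` constraint rejects non-positive quantities), while the document side accepts whatever shape each record has and leaves validation to the application.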

Testing and Deployment Strategies

Effective testing strategies are crucial for ensuring the quality and reliability of the application. Integrating robust testing frameworks into the development process helps identify and fix bugs early, minimizing potential issues in production. Deployment strategies must be carefully planned and implemented to ensure minimal downtime and smooth transitions to production.

Selecting the correct tools for deployment and continuous integration/continuous delivery (CI/CD) is also critical. Automated processes minimize errors and improve efficiency, allowing faster and more reliable releases.
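To make the idea of early, automated testing concrete, here is a minimal sketch using Python's standard `unittest` module. The `reorder_quantity` function and its rule (top up to a target level once stock falls to the reorder point) are assumptions made up for this example.

```python
import unittest


def reorder_quantity(on_hand: int, reorder_point: int, target_level: int) -> int:
    """Return units to order: top up to target_level once stock reaches reorder_point."""
    if on_hand < 0:
        raise ValueError("on_hand cannot be negative")
    if on_hand > reorder_point:
        return 0
    return target_level - on_hand


class ReorderQuantityTest(unittest.TestCase):
    def test_no_order_above_reorder_point(self):
        self.assertEqual(reorder_quantity(120, 50, 200), 0)

    def test_tops_up_to_target(self):
        self.assertEqual(reorder_quantity(30, 50, 200), 170)

    def test_rejects_negative_stock(self):
        with self.assertRaises(ValueError):
            reorder_quantity(-1, 50, 200)


# Run the suite programmatically; a CI job would more typically
# invoke `python -m unittest` against the test modules.
suite = unittest.defaultTestLoader.loadTestsFromTestCase(ReorderQuantityTest)
result = unittest.TextTestRunner(verbosity=0).run(suite)
```

A CI/CD pipeline can run a suite like this on every commit and block the deployment step when `result.wasSuccessful()` is false.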

Security Considerations in Technology Stack Selection

Security is paramount in any software project. A well-considered technology stack should prioritize security from the start by incorporating security best practices and implementing robust security measures. This includes secure coding practices, regular security audits, and adherence to industry security standards.

Protecting user data and preventing vulnerabilities are equally important. Thorough security assessments and vulnerability scanning should be integrated into the development lifecycle rather than treated as an afterthought. This proactive approach reduces risk and strengthens the overall security of the application.

Implementing Robust Data Management Strategies

Defining Data Management Goals

A crucial first step in implementing robust data management is clearly defining the goals and objectives. This involves identifying the specific needs of your organization and how data will be used to achieve those goals. Understanding what data is required, where it comes from, and how it will be utilized is paramount for successful data management. This initial planning phase ensures that the chosen data management strategies are aligned with the overarching business objectives.

Defining these goals also involves establishing clear metrics for success, such as data accuracy, accessibility, and security. These metrics provide a benchmark for evaluating the effectiveness of the implemented strategies and allow for continuous improvement over time. Establishing these metrics will help you track and demonstrate the value of your data management efforts.
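One way such a metric might be computed is sketched below: a completeness score, defined here as the share of records in which every required field is populated. The field names in `REQUIRED_FIELDS` and the sample records are hypothetical, and real programs would likely track several metrics side by side.

```python
from typing import Iterable, Mapping, Sequence

# Hypothetical schema: the fields every record is expected to carry.
REQUIRED_FIELDS = ("sku", "quantity", "warehouse")


def completeness(
    records: Iterable[Mapping[str, object]],
    required: Sequence[str] = REQUIRED_FIELDS,
) -> float:
    """Fraction of records in which every required field is present and non-empty."""
    records = list(records)
    if not records:
        return 1.0  # nothing to measure, so nothing is incomplete
    complete = sum(
        all(record.get(field) not in (None, "") for field in required)
        for record in records
    )
    return complete / len(records)


records = [
    {"sku": "SKU-001", "quantity": 40, "warehouse": "A"},
    {"sku": "SKU-002", "quantity": None, "warehouse": "B"},  # missing quantity
    {"sku": "", "quantity": 5, "warehouse": "A"},            # empty SKU
    {"sku": "SKU-004", "quantity": 12, "warehouse": "C"},
]
score = completeness(records)
```

Tracking a number like `score` over time gives a simple benchmark against which later improvements can be measured.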

Choosing the Right Data Management Tools

Selecting appropriate data management tools is essential for efficient data storage, retrieval, and analysis. This involves evaluating various software solutions, considering factors like scalability, security features, and compatibility with existing systems. A well-chosen toolset will streamline the data management process, reducing manual errors and improving overall efficiency. Thorough research and vendor comparisons are vital for making informed decisions.

Different tools cater to different needs. For instance, some tools excel at data warehousing, while others are better suited for data visualization. Careful consideration of the specific requirements of your organization will help determine the optimal tools for data management.

Ensuring Data Integrity and Accuracy

Maintaining data integrity and accuracy is critical for reliable decision-making. This involves establishing clear data validation rules and procedures to ensure data quality and prevent errors. Implementing robust validation checks helps to minimize inconsistencies and inaccuracies in the data, safeguarding against flawed insights and decisions. Regular data audits are crucial for identifying and correcting any discrepancies.

Data cleansing procedures should be implemented to address existing data quality issues. These procedures could involve correcting errors, standardizing formats, and removing redundant or outdated information. This process ensures that the data is in a consistent format and ready for analysis, leading to more accurate and reliable insights.
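The validation and cleansing steps described in this section can be sketched as follows. The two rules (a non-empty SKU and a non-negative integer quantity) and the normalization choices are assumptions picked for illustration; actual rules depend on the data involved.

```python
def validate(record: dict) -> list:
    """Return a list of rule violations; an empty list means the record is valid."""
    errors = []
    if not str(record.get("sku", "")).strip():
        errors.append("sku is missing")
    quantity = record.get("quantity")
    # bool is a subclass of int in Python, so exclude it explicitly.
    if not isinstance(quantity, int) or isinstance(quantity, bool) or quantity < 0:
        errors.append("quantity must be a non-negative integer")
    return errors


def cleanse(records: list) -> list:
    """Drop invalid records, standardize SKU formatting, and remove duplicates."""
    seen = set()
    cleaned = []
    for record in records:
        if validate(record):
            continue  # skip records that break a validation rule
        normalized = {
            "sku": str(record["sku"]).strip().upper(),
            "quantity": record["quantity"],
        }
        key = (normalized["sku"], normalized["quantity"])
        if key not in seen:
            seen.add(key)
            cleaned.append(normalized)
    return cleaned


raw = [
    {"sku": " sku-001 ", "quantity": 40},
    {"sku": "SKU-001", "quantity": 40},   # duplicate once formatting is standardized
    {"sku": "", "quantity": 3},           # fails the SKU rule
    {"sku": "sku-002", "quantity": -1},   # fails the quantity rule
]
cleaned = cleanse(raw)
```

In practice, rejected records would usually be logged or routed to a review queue rather than silently dropped, so that audits can trace what was removed and why.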

Implementing Data Security Protocols

Protecting sensitive data is paramount in today's digital landscape. Data security protocols should be implemented to safeguard data from unauthorized access, breaches, and loss. This includes using strong encryption methods, implementing access controls, and regularly backing up data. Robust security measures mitigate the risk of data breaches and safeguard the confidentiality, integrity, and availability of sensitive information.

Regular security audits and vulnerability assessments are necessary to ensure the effectiveness of the implemented protocols. This proactive approach helps to identify potential weaknesses and address them before they can be exploited. Staying updated with the latest security best practices is essential for maintaining a secure data environment.

Establishing Data Governance Policies

Effective data management requires clear governance policies to ensure data quality, security, and compliance. These policies should outline roles and responsibilities for data management, establish data access controls, and define standards for data storage and usage. These policies are crucial for ensuring consistent data practices across the organization and promoting responsible data handling.
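As one possible way to express roles and access controls in code, the sketch below maps roles to permissions and denies anything not explicitly granted. The role names and the `ROLE_PERMISSIONS` mapping are hypothetical; a real policy would come from the organization's governance documents.

```python
from enum import Enum


class Permission(Enum):
    READ = "read"
    WRITE = "write"
    DELETE = "delete"


# Hypothetical policy: each role lists the permissions it is granted.
ROLE_PERMISSIONS = {
    "analyst": {Permission.READ},
    "data_steward": {Permission.READ, Permission.WRITE},
    "admin": {Permission.READ, Permission.WRITE, Permission.DELETE},
}


def is_allowed(role: str, permission: Permission) -> bool:
    """Deny by default: unknown roles and ungranted permissions are rejected."""
    return permission in ROLE_PERMISSIONS.get(role, set())
```

Keeping the policy in a single data structure makes it easier to document, review, and communicate, which supports the transparency goals of a governance framework.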

Documentation of these policies is essential for maintaining transparency and accountability. Clear communication of these policies to relevant stakeholders is also vital. This ensures that everyone understands their roles and responsibilities in upholding the organization's data governance framework.

Training and Support for Data Management Staff

Investing in training and support for data management staff is essential for successful implementation. This includes providing training on data management tools, best practices, and relevant regulations. Empowering data management professionals with the necessary skills and knowledge ensures that data is handled effectively and efficiently.

Providing ongoing support and resources to the data management team is equally important. This can include access to technical assistance, documentation, and training materials. A supportive environment fosters continuous learning and improvement, contributing to the long-term sustainability of the data management program.

Ensuring Security and Resilience

Robust Security Measures

Implementing robust security measures is paramount to safeguarding sensitive supply chain data and preventing unauthorized access. This involves encrypting data both in transit and at rest, utilizing multi-factor authentication for all user accounts, and regularly conducting security audits to identify and address vulnerabilities. These measures not only protect against malicious actors but also ensure compliance with industry regulations and maintain the trust of stakeholders.
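Multi-factor authentication is commonly built on time-based one-time passwords (TOTP, RFC 6238). The sketch below shows how such a code can be derived with only the standard library, assuming a shared secret, a 30-second time step, and HMAC-SHA1. It is meant to illustrate the mechanism; a production system would normally rely on a vetted authentication library or service instead.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def totp(secret: bytes, at_time: Optional[float] = None,
         step: int = 30, digits: int = 6) -> str:
    """Derive a time-based one-time password following RFC 6238 (HMAC-SHA1)."""
    now = time.time() if at_time is None else at_time
    counter = int(now // step)  # number of time steps since the Unix epoch
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation, as defined in RFC 4226
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def verify(secret: bytes, submitted: str, at_time: Optional[float] = None) -> bool:
    """Compare in constant time to avoid leaking information through timing."""
    return hmac.compare_digest(totp(secret, at_time), submitted)
```

A real deployment would typically also accept codes from an adjacent time step to tolerate clock drift, and would rate-limit verification attempts.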

Regular security awareness training for all employees involved in the supply chain is also crucial. This training should cover topics such as phishing scams, social engineering tactics, and best practices for handling sensitive information. Empowering employees with the knowledge to recognize and report potential threats is a significant step towards building a more secure and resilient system.

Redundancy and Failover Strategies

To ensure high availability and minimize downtime, implementing redundancy and failover strategies is essential. This involves creating backup systems and data centers to handle peak demand or unexpected outages. Having redundant servers, network connections, and storage solutions allows for seamless failover, ensuring minimal disruption to the supply chain operations.

Redundancy should cover the entire supply chain infrastructure, not just individual components. This includes backup power supplies, disaster recovery plans, and provisions for alternative transportation routes in case of unforeseen events.
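At the software level, failover often comes down to trying a primary resource and falling back to replicas when it is unavailable. The sketch below illustrates that pattern; the `primary` and `secondary` functions are hypothetical stand-ins for calls to real services in separate data centers.

```python
from typing import Callable, Sequence


def call_with_failover(endpoints: Sequence[Callable[[], str]]) -> str:
    """Try each endpoint in order and return the first successful response."""
    errors = []
    for endpoint in endpoints:
        try:
            return endpoint()
        except ConnectionError as exc:
            errors.append(exc)  # remember the failure and move to the next replica
    raise RuntimeError(f"all {len(errors)} endpoints failed")


def primary() -> str:
    # Simulates an outage in the primary data center.
    raise ConnectionError("primary data center unreachable")


def secondary() -> str:
    return "inventory snapshot from secondary"


response = call_with_failover([primary, secondary])
```

Ordering the endpoints by preference keeps traffic on the primary while it is healthy, and the final `RuntimeError` makes a total outage visible instead of failing silently.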

Disaster Recovery Planning

A comprehensive disaster recovery plan (DRP) is critical for mitigating the impact of unforeseen events such as natural disasters, cyberattacks, or pandemics. The DRP should outline specific procedures for data recovery, system restoration, and business continuity in the event of a disruption. This plan should be regularly tested and updated to ensure its effectiveness in real-world scenarios.

Thorough documentation of all critical systems, processes, and dependencies is a foundational element of a successful DRP. This allows for swift identification and restoration of critical components in the event of a disaster. Regular simulation exercises and drills are also essential to ensure that the plan is well-understood and executable by all relevant personnel.

Data Integrity and Validation

Maintaining data integrity and accuracy throughout the supply chain is vital for making informed decisions and ensuring efficient operations. Implementing robust data validation checks at each stage of the process helps to identify and correct errors early on, reducing the risk of downstream problems. This includes validating data against predefined rules, using checksums, and incorporating data quality checks into automated workflows.
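A checksum check of the kind mentioned above might look like the following sketch, which hashes a canonical JSON form of a record with SHA-256 so that the receiving stage can detect accidental changes. The record fields are hypothetical. Note that a plain hash detects corruption but not deliberate tampering; that would call for a keyed construction such as an HMAC.

```python
import hashlib
import json


def checksum(record: dict) -> str:
    """SHA-256 over a canonical JSON encoding, so key order does not matter."""
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


sent = {"shipment_id": 1001, "sku": "SKU-001", "quantity": 40}
digest = checksum(sent)  # transmitted alongside the record

received_ok = {"quantity": 40, "sku": "SKU-001", "shipment_id": 1001}
received_bad = {**sent, "quantity": 4}  # a digit was lost in transit

intact = checksum(received_ok) == digest
corrupted = checksum(received_bad) != digest
```

Running this comparison at each hand-off point lets an automated workflow reject or quarantine a record as soon as it stops matching its checksum.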

Supply Chain Visibility and Monitoring

Implementing real-time tracking and monitoring tools provides crucial visibility into the entire supply chain. These tools allow for continuous monitoring of inventory levels, shipment statuses, and potential disruptions. Having this level of visibility helps in proactively identifying and addressing potential bottlenecks or issues, enabling rapid response and minimizing delays.
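A very small version of inventory monitoring is a threshold check over the latest readings. The sketch below assumes a hypothetical `StockReading` structure with a per-SKU reorder point; real monitoring tools would consume a live feed and route alerts to the appropriate team.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class StockReading:
    sku: str
    on_hand: int
    reorder_point: int


def low_stock_alerts(readings: Iterable[StockReading]) -> List[str]:
    """Return one alert message per SKU at or below its reorder point."""
    return [
        f"{r.sku}: {r.on_hand} on hand, reorder point {r.reorder_point}"
        for r in readings
        if r.on_hand <= r.reorder_point
    ]


readings = [
    StockReading("SKU-001", on_hand=120, reorder_point=50),
    StockReading("SKU-002", on_hand=30, reorder_point=50),
    StockReading("SKU-003", on_hand=50, reorder_point=50),
]
alerts = low_stock_alerts(readings)
```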

Implementing this visibility also improves the ability to forecast future demand, optimize resource allocation, and streamline overall supply chain operations. Advanced analytics can be applied to these data streams to further enhance predictive capabilities.
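As a baseline for demand forecasting, a simple moving average predicts the next period from the mean of the most recent periods. The sketch below is only a starting point under that assumption, and the demand figures are made up; more advanced analytics would account for trend and seasonality.

```python
from typing import Sequence


def moving_average_forecast(demand: Sequence[float], window: int = 3) -> float:
    """Forecast the next period as the mean of the last `window` observations."""
    if window <= 0:
        raise ValueError("window must be positive")
    if len(demand) < window:
        raise ValueError("not enough history for the requested window")
    return sum(demand[-window:]) / window


# Hypothetical weekly demand for one SKU, oldest first.
history = [100, 120, 110, 130, 125, 135]
forecast = moving_average_forecast(history, window=3)
```

A shorter window reacts faster to recent changes, while a longer one smooths out noise; comparing forecasts against actual demand helps choose between them.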

Security Training and Awareness

Investing in comprehensive security training and awareness programs is key to building a culture of security within the supply chain organization. This should cover not only technical security measures, but also social engineering, phishing, and other relevant threats. Regular updates and refresher courses are crucial to ensure that employees remain informed about the latest security best practices and threats.

Engaging employees in security awareness programs fosters a proactive approach to security, encouraging them to report suspicious activities and contribute to the overall resilience of the supply chain. Promoting a strong security culture is a critical element in preventing security breaches and maintaining the integrity of the system.