Predictive analytics for optimizing labor scheduling in warehouses

Forecasting Demand and Resource Requirements

Understanding the Importance of Accurate Forecasting

Accurate forecasting of demand and resource requirements is crucial for optimizing any operation, especially in supply chain management and production. Without a clear view of future needs, businesses risk overstocking, which wastes resources and ties up capital, or understocking, which leads to lost sales and frustrated customers. Forecasting lets businesses adjust their strategies ahead of predicted demand rather than react after the fact, improving efficiency and profitability.

Predictive analytics digs deeper into historical data patterns and trends. By identifying these patterns, forecasting models can project future demand more accurately, enabling companies to make informed decisions about inventory levels, workforce scheduling, and production capacity. Planning ahead in this way helps a business stay competitive when demand shifts quickly.

Data Collection and Preparation for Effective Forecasting

The quality of a forecast hinges significantly on the quality of the data used to create the model. This involves meticulously collecting relevant data points from various sources, including sales records, market trends, economic indicators, and even external factors like weather patterns or competitor activities. Thorough data cleaning and preprocessing are equally critical to ensure the data is accurate, consistent, and suitable for analysis.

Data preparation transforms raw data into a format that forecasting models can use. This includes handling missing values, identifying outliers, and transforming variables to improve model accuracy. Data integrity at this stage is the foundation of the whole forecasting process: a model trained on unreliable inputs will produce unreliable forecasts.
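As a rough illustration of the preparation steps described here, the sketch below forward-fills missing readings and clips outliers using the interquartile range. The function names, the sample figures, and the choice of forward-fill and a 1.5 x IQR rule are all assumptions made for the example; other imputation and outlier rules may suit a given dataset better.

```python
from statistics import quantiles


def fill_missing(values):
    """Forward-fill None entries; leading gaps take the first observed value."""
    observed = [v for v in values if v is not None]
    if not observed:
        raise ValueError("series contains no observed values")
    filled, last = [], observed[0]
    for v in values:
        if v is not None:
            last = v
        filled.append(last)
    return filled


def clip_outliers(values, k=1.5):
    """Clip values outside [Q1 - k*IQR, Q3 + k*IQR] to the nearest bound."""
    q1, _, q3 = quantiles(values, n=4)
    spread = q3 - q1
    low, high = q1 - k * spread, q3 + k * spread
    return [min(max(v, low), high) for v in values]


# Hypothetical daily units shipped; None marks a missing reading.
raw = [100, 102, None, 101, 99, 1000, 100, 103]
cleaned = clip_outliers(fill_missing(raw))
```

Clipping keeps the day in the series instead of dropping it, which preserves the calendar alignment that time series models usually depend on.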

Choosing the Right Forecasting Models

Several forecasting models are available, each with its strengths and weaknesses. The choice of model depends on the specific characteristics of the data and the desired level of accuracy. Time series models, for example, are suitable for predicting future values based on past trends. Regression models, on the other hand, can incorporate multiple variables to predict demand, offering a more nuanced approach. Selecting the appropriate model is a crucial step in ensuring the accuracy and relevance of the forecast.

Understanding the strengths and weaknesses of different approaches is essential for choosing the right model. This involves considering factors such as the data's complexity, the desired level of accuracy, and the computational resources available. The goal is to select a model that accurately captures the underlying patterns in the data and provides reliable predictions for future demand.
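To make the contrast between the two model families concrete, here is a minimal sketch of one very simple model from each: a seasonal naive time series forecast, which repeats the last observed season, and an ordinary least-squares line that relates demand to a single driver variable. Both are baseline techniques chosen for illustration, not a recommendation; the function names and the sample history are hypothetical.

```python
def seasonal_naive(history, season_length, horizon):
    """Time series baseline: repeat the most recent full season forward."""
    if len(history) < season_length:
        raise ValueError("need at least one full season of history")
    last_season = history[-season_length:]
    return [last_season[i % season_length] for i in range(horizon)]


def fit_line(x, y):
    """Regression baseline: least-squares slope and intercept for y = a*x + b."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("x values must not all be identical")
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Hypothetical two weeks of daily order lines (weekly seasonality assumed).
history = [120, 135, 150, 160, 180, 90, 60, 125, 140, 155, 165, 185, 95, 65]
next_three_days = seasonal_naive(history, season_length=7, horizon=3)

# Hypothetical driver: promotional emails sent (thousands) vs. order lines.
slope, intercept = fit_line([1, 2, 3], [2, 4, 6])
```

A baseline like the seasonal naive forecast is also useful as a yardstick: a more complex model is only worth its cost if it beats the baseline on held-out data.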

Implementing and Monitoring Forecasting Models

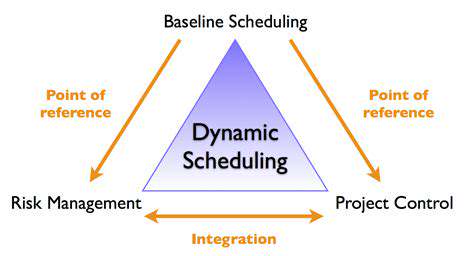

Once a forecasting model is selected and implemented, regular monitoring is crucial for ensuring its continued effectiveness. This involves tracking the model's performance against actual results and making necessary adjustments to maintain accuracy. By closely monitoring the model's output, businesses can identify potential deviations or errors and refine the model accordingly to adapt to changing market conditions.
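Tracking performance against actual results can be as simple as computing an error metric on each new batch of actuals. The sketch below uses mean absolute percentage error (MAPE) and mean bias, and flags the model for review when MAPE passes a threshold. The 15% threshold and the function names are assumptions for illustration; an appropriate threshold depends on the operation.

```python
def mape(actual, forecast):
    """Mean absolute percentage error, skipping periods with zero actuals."""
    pairs = [(a, f) for a, f in zip(actual, forecast) if a != 0]
    if not pairs:
        raise ValueError("no non-zero actuals to score against")
    return 100 * sum(abs(a - f) / abs(a) for a, f in pairs) / len(pairs)


def bias(actual, forecast):
    """Mean signed error; positive means the model over-forecasts."""
    return sum(f - a for a, f in zip(actual, forecast)) / len(actual)


def needs_review(actual, forecast, mape_threshold=15.0):
    """Flag the model when its recent MAPE exceeds the chosen threshold."""
    return mape(actual, forecast) > mape_threshold


# Hypothetical actuals and forecasts for two periods.
recent_error = mape([100, 200], [110, 180])
```

Watching bias alongside MAPE helps distinguish a model that is noisy from one that is consistently too high or too low, which usually calls for a different fix.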

Integrating Forecasting into Operational Planning

Effective forecasting extends beyond simply generating numbers; it's about seamlessly integrating those predictions into the operational planning process. This includes incorporating the forecast into production schedules, inventory management systems, and workforce planning. By aligning operational activities with the predicted demand, businesses can optimize resource allocation and minimize waste, ultimately enhancing overall efficiency.

Integrating forecasts into existing business processes requires a collaborative approach. This involves aligning the forecasting team with operational teams to ensure data insights are effectively utilized in daily operations. This close collaboration leads to a more streamlined and responsive approach to managing resources and meeting demand.
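For workforce planning in particular, the link between a demand forecast and a schedule is a conversion from forecast volume to labor hours and then to headcount. The sketch below shows that arithmetic under stated assumptions: a hypothetical productivity rate in units per labor hour, an 8-hour shift, and an 85% utilization factor to allow for breaks and indirect time. None of these figures come from the article.

```python
import math


def required_headcount(forecast_units, units_per_labor_hour,
                       shift_hours=8.0, utilization=0.85):
    """Convert a shift's forecast volume into the number of workers needed."""
    if units_per_labor_hour <= 0 or shift_hours <= 0 or not 0 < utilization <= 1:
        raise ValueError("rates and hours must be positive; utilization in (0, 1]")
    labor_hours = forecast_units / units_per_labor_hour
    productive_hours_per_worker = shift_hours * utilization
    # Round up: a fractional worker still means one more person on the floor.
    return math.ceil(labor_hours / productive_hours_per_worker)


# Hypothetical shift: 12,000 units forecast at 100 units per labor hour.
workers = required_headcount(12_000, units_per_labor_hour=100)
```

In practice the productivity rate would itself be estimated from historical data, and often differs by task type (picking, packing, receiving), so a real schedule would apply this calculation per task.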

Utilizing Predictive Analytics for Resource Optimization

Beyond forecasting demand, predictive analytics plays a vital role in optimizing resource allocation. By analyzing the factors that influence resource consumption, such as machine downtime, employee productivity, and material availability, businesses can anticipate potential bottlenecks and adjust resource allocation before they occur. Managing resources this way can reduce costs and improve operational efficiency.

Predictive analytics allows for the identification of potential issues before they impact production. This can help prevent costly delays and ensure smooth operations. By leveraging predictive models, companies can optimize their resource utilization and improve overall operational performance.
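One simple way to surface potential issues ahead of time is to compare forecast labor requirements with scheduled capacity and list the shifts that fall short. The sketch below assumes both are already expressed in labor hours per shift; the shift labels and figures are hypothetical.

```python
def find_shortfalls(required_hours, available_hours):
    """Return {shift: missing_hours} for shifts where need exceeds capacity."""
    shortfalls = {}
    for shift, need in required_hours.items():
        # A shift with no scheduled capacity is treated as zero hours available.
        have = available_hours.get(shift, 0.0)
        if need > have:
            shortfalls[shift] = need - have
    return shortfalls


# Hypothetical forecast requirement vs. scheduled capacity, in labor hours.
required = {"mon_am": 120, "mon_pm": 80}
available = {"mon_am": 100, "mon_pm": 96}
gaps = find_shortfalls(required, available)
```

The resulting gaps can then feed a decision about overtime, temporary staff, or moving work between shifts.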

Improving Accuracy and Reducing Costs

Improving Data Collection Methods

Accurate data collection is fundamental to any successful analysis. Inaccurate data leads to flawed conclusions and, ultimately, wasted resources. To improve accuracy, rigorous methods must be applied at every stage, from initial planning to final data validation. This includes clearly defined data collection protocols, standardized data entry procedures, and comprehensive quality control measures. Using more than one data collection method, such as surveys and interviews, can also make the findings more reliable and give a fuller picture of the subject. Each method has its own biases and limitations, and these must be considered so that the data accurately reflects the target population.

A key aspect of improving data collection methods involves training personnel involved in the process. Thorough training ensures consistent data entry and minimizes errors. This includes clear instructions on how to accurately record data, handle ambiguous situations, and recognize potential inconsistencies. Well-trained personnel are essential for maintaining data quality and minimizing human error. Regular audits of data collection procedures are also important to identify and address any weaknesses or inefficiencies in the process. Continuous improvement through feedback and adaptation is key to achieving optimal accuracy.

Optimizing Data Analysis Techniques

While accurate data collection is vital, the effectiveness of the analysis process cannot be overlooked. Sophisticated analytical techniques are essential to uncover meaningful insights and patterns from the collected data. Employing statistical modeling, machine learning algorithms, or data visualization tools can transform raw data into actionable information. Choosing the right analytical approach is critical to extracting relevant and meaningful conclusions from the data. This involves understanding the nature of the data, the research questions, and the limitations of the chosen methods. This understanding is essential for ensuring the reliability and validity of the results.

Careful consideration of potential confounding variables is crucial during the data analysis phase. Such variables can significantly impact the results, potentially leading to inaccurate or misleading conclusions. Therefore, meticulous attention must be paid to controlling for these variables through appropriate statistical methods or experimental designs. This step is critical to ensuring that the observed effects are genuinely attributable to the variables of interest and not to other factors. By controlling for confounding variables, the accuracy and reliability of the analysis are significantly enhanced.

Implementing Robust Quality Control Measures

Implementing robust quality control measures is an indispensable component of any data analysis project. These measures ensure that the collected data is trustworthy and reliable, minimizing the risk of errors and inaccuracies. Regular data validation checks throughout the process, from data entry to final analysis, are critical. This involves scrutinizing the data for inconsistencies, outliers, and potential errors. Utilizing data validation tools and techniques can streamline this process and enhance efficiency.
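The validation checks described here can be automated with a small routine that scans records and reports problems instead of silently fixing them. The sketch below assumes a hypothetical record layout (a dict with `date` and `units` keys) and checks for missing fields, duplicate dates, and negative quantities; a real pipeline would add checks specific to its own data.

```python
def validate_records(records):
    """Return a list of (row_index, problem) tuples for suspect records."""
    issues = []
    seen_dates = set()
    for i, rec in enumerate(records):
        date, units = rec.get("date"), rec.get("units")
        if date is None:
            issues.append((i, "missing date"))
        elif date in seen_dates:
            issues.append((i, "duplicate date"))
        else:
            seen_dates.add(date)
        if units is None:
            issues.append((i, "missing units"))
        elif units < 0:
            issues.append((i, "negative units"))
    return issues


# Hypothetical daily volume records with three deliberate problems.
sample = [
    {"date": "2024-03-01", "units": 950},
    {"date": "2024-03-01", "units": 970},
    {"date": "2024-03-02", "units": -5},
    {"date": "2024-03-03"},
]
problems = validate_records(sample)
```

Reporting issues by row index keeps a record of what was found, which supports the traceability that quality control depends on.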

A crucial element of quality control is the establishment of clear standards and protocols for data handling. These standards should address data entry, storage, and access procedures. Maintaining meticulous records of all data manipulation steps is essential for traceability and reproducibility. This ensures that the entire process can be reviewed and validated, thus contributing to the integrity of the final results. Thorough documentation of the methodology, including any adjustments or revisions, is also important for transparency and reproducibility in future research.

Implementing a robust feedback loop is crucial for continuous improvement in data quality. Feedback mechanisms should be in place to identify and address any shortcomings in the data collection, analysis, or quality control processes. This includes soliciting feedback from participants, reviewers, and stakeholders to gain insights into areas where improvements can be made. Collecting and analyzing feedback ensures that the entire process is optimized for accuracy and reliability. Regular reviews and updates to the protocols are also important to adapt to emerging challenges and ensure the continued effectiveness of the quality control measures.
